Indian Prime Minister Narendra Modi in a group photograph along with global tech leaders at the Opening Ceremony of India AI Impact Summit 2026 at Bharat Mandapam, in New Delhi on February 19, 2026. Image via Wikipedia by the Prime Minister's Office, licensed under the Government Open Data License – India (GODL).

This article is part of the series "Don't ask AI, ask a peer," a collaboration among Global Voices, the Association for Progressive Communications, and GenderIT. The series aims to re-emphasise the importance of knowledge sharing among people, as has been done for decades. You can follow the series on APC.org, GenderIT.org, and globalvoices.org. It is also part of Global Voices' April 2026 Spotlight series, "Human perspectives on AI." You can support this coverage by donating here.

India's current AI governance framework consists of soft guidelines, an unenacted ethics bill, and data protection rules. Together, they create a system in which AI can be deployed first and questioned later: the framework emphasises responsible AI but imposes no binding transparency obligations and no requirement for pre-deployment impact assessments.

AI-enabled monitoring, such as facial recognition systems at railway stations and predictive policing tools used by police in several cities, has expanded largely without adequate legal safeguards, raising concerns about human rights, privacy, dignity, and civil liberties. "Responsible AI" without accountability is merely an aspiration. Although the framework speaks of a "people-first" approach, human rights must be the starting point of AI governance, not a consideration layered on afterwards.

In February, India hosted the five-day India AI Impact Summit 2026 in New Delhi, which brought together global leaders, Big Tech platforms, civil society actors, and policymakers to explore the future of AI governance and cooperation. The event underlined AI's growing economic and geopolitical significance as the technology continues to transform global economies. The summit focused on inclusive growth, ethical deployment, and AI use across sectors such as healthcare, agriculture, and education.

In a statement published on February 20, the human rights organisation Amnesty International contrasted the claims made at the AI Impact Summit with how AI is actually being used in India. Amnesty stressed that technologies such as AI-driven automation and facial recognition are restricting civic space, aiding state surveillance, and disproportionately affecting the poor and the marginalised.

The event itself effectively served as a live demonstration of Delhi's expanding AI-driven surveillance grid. Ostensibly to secure the summit venue, Delhi Police installed 500 security cameras at the international exhibition centre and turned central Delhi into a "digital fortress," with more than 4,000 AI-enabled cameras deployed across the city.

These cameras used facial recognition to analyse live video feeds, matching faces against police databases of “suspected individuals,” repeat protesters, and people flagged for public-order concerns, with alerts sent instantly. The AI-driven surveillance network was coordinated through 32 control rooms, supported by facial recognition systems (FRS), real-time video analytics, and AI-enabled smart glasses used by officers on the ground. More than 20,000 personnel relied on the system to monitor crowd density, movement patterns, and potential “troublemakers” in and around the AI summit in real time.

Perils of AI-enabled state surveillance

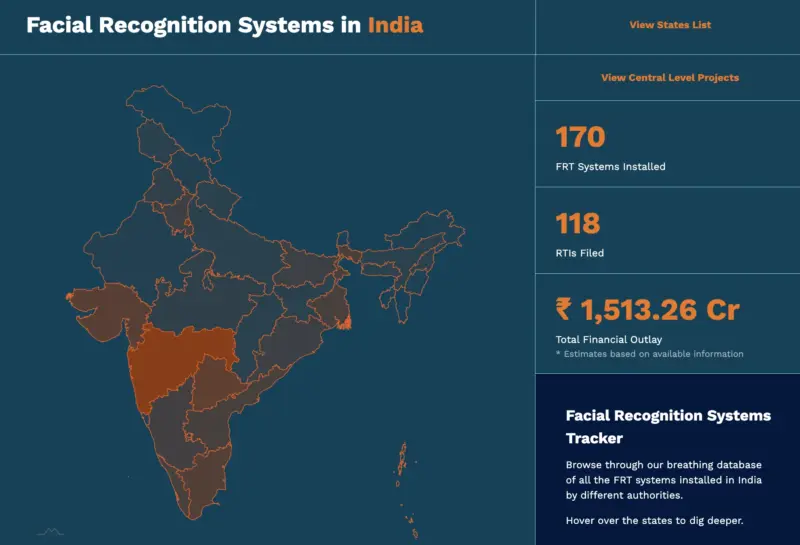

To track the adoption of facial recognition technology in India, the rights-based organisation Internet Freedom Foundation (IFF) launched Project Panoptic in 2020. By 2024, having recorded more than 120 government contracts, it had built the most comprehensive public database of facial recognition use in India.

The Indian legal services non-profit SFLC.in recently published a roundup of incidents involving AI and surveillance in India in 2025. It documents the technology's use across various domains, including AI-driven surveillance in travel.

Screenshot of the Facial Recognition System in India tracker, hosted by IFF's Project Panoptic. CC-BY.

The government-backed, AI-based facial recognition app DigiYatra, positioned as a flagship digital aviation initiative, combines a passenger's Aadhaar ID, boarding pass, and facial biometrics to enable "seamless" paperless travel at Indian airports. Although DigiYatra is, on paper, opt-in and "not mandatory," IFF says that airline passengers across India are being ambushed and pushed into enrolling.

IFF further says that DigiYatra has weak data protections and an opaque ecosystem: there is no transparency about what data is stored, how or where it is stored, or who has access to it. The service is run by the Digi Yatra Foundation, around 75 percent of whose shares are owned by private companies, which places it outside the ambit of the Right to Information Act, 2005 (RTI Act) and its accountability mechanisms.

AI-enabled CCTV surveillance is also increasingly deployed in exam halls across India to monitor students. These systems track gestures, mobile phone use, and facial cues such as unusual eye movements, and send real-time alerts to proctors. By 2025, they had been used in school board exams and major competitive examinations in states such as Uttar Pradesh, Bihar, and Karnataka.

SFLC.in says AI-driven surveillance is spreading across India with little information about oversight or data retention, and few means to contest misidentification. As such technologies become part of public infrastructure, consent is increasingly ignored and human rights concerns receive minimal consideration.

Deployment of AI technology is impacting millions

According to an investigation by Decode, the investigative unit of BOOM, one of India's leading independent digital journalism and fact-checking organisations, AI-driven facial recognition is failing to recognise women whose faces have changed due to pregnancy, illness, or ageing, denying them verification and, with it, access to public services. The facial recognition feature in the Poshan Tracker, a government app, failed to match the women's current appearance to years-old Aadhaar ID photographs.

India's Integrated Child Development Services (ICDS) programme is responsible for feeding approximately 47 million pregnant women, nursing mothers, and young children. In July 2025, the programme introduced an AI facial recognition scan for identification. By the end of 2025, almost half of the intended beneficiaries had not received their food, because the system simply could not recognise their faces.

Facial recognition struggles in poor lighting, on low-end phones, and, most critically, with darker skin tones. That last flaw is well documented, yet no one appears to have asked whether it mattered before the system was rolled out to some of India's most marginalised communities.

Indian regulations on AI

India does not yet have a dedicated, binding AI statute. Instead, it relies on a patchwork of existing laws, such as the Information Technology Act, 2000, the intermediary rules issued under it, and data protection law, supplemented by governance guidance. The India AI Governance Guidelines, released by India's Ministry of Electronics and Information Technology (MeitY) in November 2025, adopt a non-interventionist model emphasising responsible AI, risk-based oversight, and protection against harms, but they are non-binding soft law.

The proposed Artificial Intelligence (Ethics and Accountability) Bill, 2025, would require the government to establish an Ethics Committee and would introduce mandatory ethical reviews, bias audits, restrictions on certain uses (e.g., surveillance and high-risk employment decisions), and grievance mechanisms. It signals an explicitly rights-oriented focus, but it has not yet been enacted.

India's digital personal data protection framework (the Digital Personal Data Protection Act, 2023, operationalised through the DPDP Rules, 2025) imposes consent-centric obligations, purpose limitation, and data minimisation on AI developers and deployers, indirectly protecting privacy and related rights in AI contexts.

The Indian government is pressing intermediaries to swiftly remove AI-generated deepfakes used for defamation, impersonation, deception, or non-consensual sexual content on social media platforms. However, even when platforms comply, the harm has often already been done by the time the synthetic media is taken down.

Current human rights approach to AI

UN human rights officials increasingly argue that international human rights law, with its emphasis on inclusivity, accountability, transparency, and the need to address power imbalances, should serve as the central framework for governing artificial intelligence. This reflects a broader shift away from voluntary discussions of "AI ethics" and towards legal obligations, such as restrictions and corrective measures. The UN High Commissioner for Human Rights has called for moratoriums on AI applications that violate fundamental rights, such as certain forms of biometric surveillance, as well as mandatory human rights due diligence at every stage of the development and deployment of AI-enabled services.

Similarly, in a 2025 joint statement, the Freedom Online Coalition urged governments to ensure business due diligence, enforce protections in high-risk situations, and require human rights impact assessments, particularly for risks such as political manipulation, disinformation, and profiling. Some observers have proposed a SAARC-wide regulatory framework modelled on the EU AI Act, with clear protections for fundamental rights and stronger transparency and accountability procedures. However, this remains a policy recommendation rather than a proposal for legally binding law.

The way forward

According to the Ontario government's Principles for the Responsible Use of Artificial Intelligence, human rights obligations must be built into the design of AI systems, not added after the fact. The Council of Europe Commissioner for Human Rights recommends that human rights impact assessments be required before high-stakes AI systems are deployed. Governments and businesses using AI in public-facing roles should be required to disclose which systems they use, the data those systems are trained on, and their error rates. Specific legal frameworks are also needed to determine when AI systems are treated as legal entities for liability purposes, and what duty of care their developers and users owe to those affected by them. Problems arise when frameworks are difficult to implement or exclude state use of AI.

MeitY's IndiaAI Mission, a national programme approved in March 2024, aims to build a full-stack AI ecosystem in India, including through its "Safe & Trusted AI" projects. So far, eight responsible AI projects covering themes such as bias mitigation, ethical AI frameworks, privacy-enhancing tools, and explainable AI have been undertaken, along with five projects targeting deepfake detection. On paper, MeitY's IndiaAI initiatives align with human rights principles. However, rights groups argue that they fall short of a true human rights framework because safeguards are voluntary, oversight is weak, and high-risk state AI (surveillance, welfare automation, predictive policing) continues without binding protections or effective remedies.

Human rights activists suggest that India should enact dedicated legislation governing AI-enabled surveillance, including policing algorithms, that mandates transparency and oversight of surveillance systems, in order to reduce the risk of digital authoritarianism.