A smartphone showing a social media post in Ge’ez script with an automated warning overlay, symbolizing the challenges of moderating content in African writing systems. Photo by Pride Chamisa. Used with permission.

By Pride Chamisa

This post is part of Global Voices’ April 2026 Spotlight series, “Human perspectives on AI.” This series will offer insight into how AI is being used in global majority countries, how its use and implementation are affecting individual communities, what this AI experiment might mean for future generations, and more. You can support this coverage by donating here.

Bereket Tsegay spent his days watching videos he did not understand.

He was hired to moderate content on TikTok at the company’s Kenya hub, one of the main centers for AI-assisted content review across Africa. He spoke Amharic, Ethiopia’s official language. But the videos in his queue came from across the continent in languages like Luo, Dholuo, Kikuyu, Dinka, and dozens more. When nothing in the visuals looked obviously wrong, and nobody had reported the video, he usually left it up. When it had been reported many times, he took it down. He has since left the job, and he is candid about what he saw: the system was doing its best with almost no real understanding of the content it was judging.

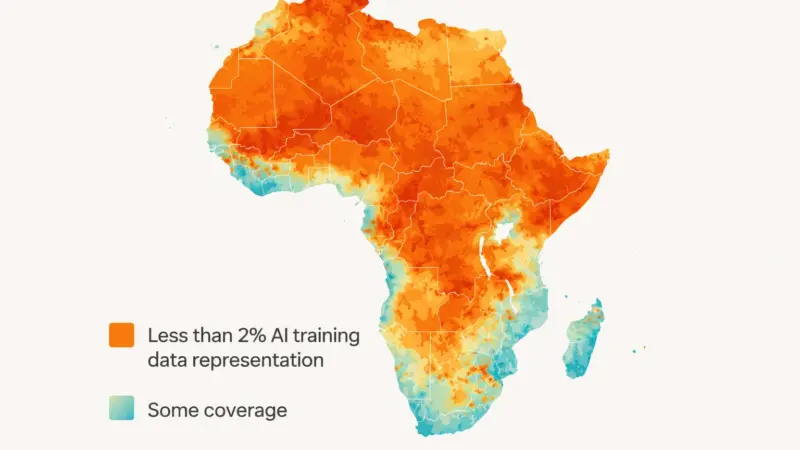

His account, reported in the Christian Science Monitor in March 2026, is one snapshot of a much wider problem. Africa has more than 2,000 languages. The AI systems that moderate content across the continent were built primarily on English-language data, with some coverage of a handful of global languages. A 2025 study, “The State of Large Language Models for African Languages,” comparing major language models found that only 42 African languages appear in any meaningful way across the systems reviewed. Just four languages, Amharic, Swahili, Afrikaans, and Malagasy, are handled with any degree of consistency. That leaves more than 98 percent of Africa’s languages essentially invisible to the moderation systems that decide what stays up and what gets removed.

The consequences fall on real people.

The language of the algorithm

Jackson Busolo is a Kenyan TikTok creator who posts in Swahili, mostly about politics. One morning in February 2025, he woke up, and his account was gone. No warning, no explanation. He appealed, and eventually the account was reestablished. He never found out why it was taken down or restored.

His case is not unusual. According to TikTok’s Q1 2025 Community Guidelines Enforcement data, as reported by Business Daily Africa, between January and March 2025, TikTok removed more than 450,000 videos from Kenya alone and banned over 43,000 accounts. By the second quarter, removals had climbed to 592,000. The platform attributes most of this to automated systems. TikTok told the Christian Science Monitor that it uses a combination of technology and human moderation across many languages and is constantly expanding its coverage. But the platform declined to say which African languages its AI moderation tools actually cover.

When a moderation system cannot process a language, it is less likely to flag content for human review. Instead, it relies on indirect signals such as user reports, visual cues, or audio patterns from languages it does recognize.

Mercy Mutemi, executive director of the Oversight Lab, a Kenyan legal advocacy group focused on technology, put it plainly. She said:

We are talking about an algorithm trained predominantly in English, being trusted to take down harmful content, while a huge percentage of TikTok users in Kenya are using TikTok in their mother tongue.

The problem is not only false positives, but also content that gets removed when it should not. There are false negatives too: harmful content in languages the system cannot parse, which stays up because nothing in the video triggers a review. In Ethiopia, false claims circulating on Facebook alleged that Ethiopian troops had seized Eritrea’s Red Sea port, spreading widely before being debunked by fact-checkers. Researchers have documented the same dynamic repeatedly. Swahili hate speech goes undetected. Moderation gaps in low-resource languages such as Hausa. Local language posts are misclassified by systems trained primarily on English.

Ethnographic research with UX practitioners across six African countries found that large language models trained predominantly on English often struggled with African language input. In one example, including even a single Yorùbá word in an otherwise English prompt produced inaccurate outputs, ranging from partial mistranslations to unrelated responses, highlighting limitations in how these models handle multilingual and culturally specific text. What happens when that same model is asked to judge whether a post violates community standards?

A heat map of Africa showing the “data desert”: orange areas represent regions with less than 2 percent representation in global AI training datasets, while teal highlights pockets of coverage concentrated around major urban and tech hubs. Photo by Pride Chamisa. Used with permission.

Who bears the cost?

The burden of a moderation system that cannot read African languages is not shared equally. It falls hardest on creators, journalists, and ordinary users who communicate in those languages.

For creators, it means building an audience in a context where the algorithm is indifferent to the actual content of your work and responsive mainly to English-language signals. Pauline Onyango, another Kenyan creator, found that months of posting in the Luo language produced almost no algorithmic traction. Her content was effectively invisible. This is not only a fairness problem. It shapes what gets made, what gets amplified, and whose stories reach audiences.

For journalists and civil society, it means that disinformation in African languages can gain more traction. Platforms with hundreds of millions of users on the continent are slower to act on harmful content in languages their systems cannot parse. Fact-checkers interviewed by Poynter described spending hours manually tracking Amharic-language Facebook posts during periods of political tension in Ethiopia, doing work that should have been caught by the platform’s systems.

For platforms themselves, there is a compliance dimension that has gone largely undiscussed. The EU AI Act, which entered into force in August 2024, requires that AI systems be non-discriminatory and that training data be representative of the populations the system will affect. The Digital Services Act (DSA), already in force since February 2024, requires platforms to explain content moderation decisions to affected users. If a system cannot identify the language a post is written in, it cannot produce a meaningful explanation for why that post was removed. These are not hypothetical future obligations. They apply now to any platform with European users, and African-language communities are present and active in Europe.

What is actually being done?

There is work happening, but it is scattered and chronically under-resourced.

Research groups like AfricaNLP, a workshop series affiliated with major computational linguistics conferences, are producing multilingual datasets, benchmarks, and models for African languages. The 2025 AfricaNLP workshop included work on hate speech detection in Hausa and Igbo, Swahili news classification, and speech recognition for low-resource languages. Academic teams at universities in Pretoria, Nairobi, and Addis Ababa are building training data for languages that have almost none.

Some commercial efforts are following. Cohere, a Canadian artificial intelligence company that develops large language models, partnered with HausaNLP to integrate African language datasets into its multilingual Aya model. The data-labeling industry, worth an estimated USD 2.8 billion globally, relies heavily on workers in Kenya, Nigeria, and other African countries to annotate the data that AI systems learn from. Those same workers rarely see their languages reflected in the outputs of the systems they help train.

The African Union’s Continental AI Strategy, approved in July 2024, commits to a people-centric approach and names data sovereignty as a priority. The AU’s strategy and the national AI strategies that followed it, including Nigeria’s in April 2025, flag linguistic diversity as something that needs addressing. But strategy documents are not models. They do not, on their own, close the gap between what systems can do and what the continent’s languages require.

The data-labeling industry relies heavily on workers in countries like Kenya to annotate what AI systems learn. Those same workers rarely see their languages reflected in the systems they help train.

A fixable problem that nobody has decided to fix

The language gap in AI content moderation is not a mystery. It is a known problem with a known cause: the economics of building AI systems have historically favored languages with large amounts of digital text, and most African languages have very little. English dominates. French, Chinese, and Arabic have some coverage. Everything else is marginal.

What makes this moment different is that the regulatory pressure is building from outside Africa in ways that might finally force change. The EU AI Act’s non-discrimination obligations apply to training data. If a system is trained on data that does not represent the populations it will serve, deployers face potential compliance exposure. The DSA’s transparency requirements mean platforms need to explain their decisions, including decisions made by systems that may have guessed rather than understood.

None of this automatically fixes the problem. But it creates, for the first time, financial consequences for ignoring it. Platforms that have treated African-language coverage as a nice-to-have rather than a core requirement may find that position harder to sustain when regulators can ask for disaggregated performance data across languages and communities.

There is also an argument that does not depend on regulation at all. Africa is one of the fastest-growing regions for social media use. The platforms that want to grow on the continent over the next decade need to actually work for the people who live there. A moderation system that treats Swahili, Yoruba, and Amharic as edge cases is not a system designed for an African audience. It is a system designed for someone else, deployed in Africa.

That is a gap worth naming plainly. Not because naming it is enough, but because the first step to fixing a problem is deciding it is, in fact, a problem rather than an acceptable trade-off.

Disclosure: The author builds content moderation technology. The views and analyses in this article are his own and are drawn from publicly available research.

Pride Chamisa is an AI researcher and the founder of VidSentry, an AI platform building context-aware video moderation tools for African-language content. He is based in Cape Town and is a GradStar Top 100 recipient.