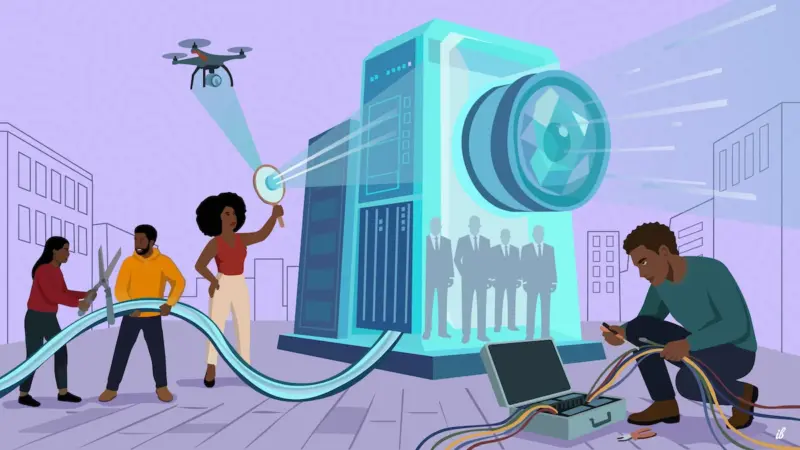

Image by Ibrahim Kizza for APC. Used with permission.

This article is part of the series “Don’t ask AI, ask a peer,” a collaboration among Global Voices, the Association for Progressive Communications, and GenderIT. The series aims to re-emphasize the importance of knowledge sharing among people, as has been done for decades. You can follow the series on APC.org, GenderIT.org, and globalvoices.org.

As one of the countries most infamous for using AI for surveillance and restricting citizens’ freedom, China cannot offer a direct answer to how to develop a human rights approach to AI. Yet, its open embrace of AI technology and its ambitions for dominance in the global AI market can serve as a case study for the burgeoning relationship between the private sector and the state, the machine and society.

In February 2026, a Spanish engineer, Sammy Azdoufal, found that he could take control of 7,000 smart vacuums simply by reverse engineering the connection between his newly purchased DJI Romo vacuum and his PlayStation gamepad. With that access, he could view real-time footage from people’s homes, listen to the surrounding sound via the machines’ live cameras, and pinpoint the exact location of the households through their IP addresses.

While the mainland Chinese company fixed the security flaw quickly, the incident highlights how easily AI-powered surveillance technology could penetrate our daily lives and the risks it poses.

Universal threats accompany AI surveillance and war technology

The aforementioned smart vacuum is unavailable in the U.S. market because its manufacturer, DJI, was designated a “Chinese military company” in 2022 and was added to the Pentagon’s blacklist due to national security concerns.

Against this backdrop, discussions about AI-powered surveillance technology and the threats that accompany it have largely been limited to China, but this technology is universal, affecting people worldwide.

China is indeed a forerunner in leveraging sophisticated surveillance technologies to bolster its repressive governance, and has even exported the toolset to other authoritarian states, as has been widely documented by international media outlets. The Chinese experience, both in the so-called social management of Uyghurs in Xinjiang, China, and the nationwide COVID-19 control system, shows the world that human monitoring in the name of grid management and the application of surveillance technology — facial recognition, a social credit system, geolocation, and online data tracking — work in tandem to maintain a grassroots policing environment that successfully pre-empts possible destabilizing acts. The Orwellian reality shows that when equipped with a powerful AI engine, the state can consolidate its power at the expense of individual freedom of assembly, expression, and movement.

The global market of repressive technology

Such advanced repressive technology has a market reach beyond imagination. In China, AI-powered facial recognition systems have been used to issue jaywalking and illegal parking tickets, process payments online and offline, and monitor students’ classroom performance, among other uses.

While democratic states are skeptical of the Chinese application of AI surveillance, such views merely push them to purchase similar AI tools from non-Chinese sources. The U.K. police, for example, have recently bought the AI-powered facial recognition technology used by Israeli intelligence to identify and track Palestinian civilians passing through Gaza. The 50 AI facial recognition machines will be deployed nationwide to identify and track individuals on the U.K. police’s watchlist. In the U.S., AI technology has also been used in the mass surveillance and arrest of undocumented immigrants and in the recent war against Iran.

On the digital front, AI’s impact on the media and information ecosystem has been devastating. The built-in censorship and political bias of DeepSeek, a China-made generative AI, and its role in spreading a pro-China narrative have been widely reported.

At the same time, Western generative AI tools are also handy for spreading propaganda across the internet. As revealed by OpenAI’s transparency reports, Russian and Chinese information operators have used ChatGPT to analyze public opinion and tailor content to their target audiences. Recently, amid the U.S.–Israel war on Iran, war propaganda in the form of AI-generated deepfake videos has also flooded major social media platforms.

A minority of disinformation operators can now manipulate the majority’s perception of reality thanks to their AI assistants.

The need to rebalance power

China also has a vital lesson for the world on AI governance. Since 2022, it has rolled out a series of laws and regulations, including the Algorithmic Recommendation Provisions (2022), Deep Synthesis Management Provisions (2023), Generative AI Interim Measures (2023), and AI Labeling Measures (2025), to prevent the illegal and malicious use of AI and to ensure that machine-generated content aligns with China’s socialist core values. User rights regarding personal data protection, consent, and access have been fairly addressed, and companies face additional requirements to enhance algorithmic transparency and to adopt non-discriminatory, non-addictive measures.

Yet, as the aim of the laws is to protect national security, public order, and political ideology, there is no restriction on state power. On Chinese social media, it is not uncommon to see users complaining that they had the police knocking at their doors simply because they bought something suspicious, such as a drone for taking photos. The social credit system tracks users’ online activity records, including financial transactions, social connections, and speech, and restricts their access to digital and public services, such as online payment systems and travel purchases, when their credit scores are low. Millions of people have been deprived of their rights due to this system.

The duties imposed by the laws on the corporate sector seem sound and fair. Yet, there are very few independent civil society organizations to monitor the private sector and ensure that technology companies have indeed implemented the protective measures required by law. As a result, security breaches and personal data leaks due to poorly designed AI products, such as the DJI Romo vacuum, have persisted in recent years. In 2025, a major data breach exposed the records of billions of Chinese users, including names, ID numbers, passwords, and financial and banking details linked to their social media accounts. Scammers could exploit these records to target the individuals involved.

Despite all the security risks, mainland Chinese citizens have been quick to embrace emerging technology, particularly AI. The latest example, which took place in early March across major Chinese cities, is the rush to install OpenClaw, an AI bot coded by Austrian programmer Peter Steinberger that integrates with LLMs such as DeepSeek and Claude, runs on a local computer, and stores data locally. Jumping on the trend, Chinese firms, including Tencent, ByteDance, and Xiaomi, rolled out similar products. Such tech fanaticism is driven by both the Chinese government’s policy to integrate advanced technology into the economy and people’s anxiety about being left behind amid rapid tech innovation. It turns people into fans and followers of a Leviathan, to which they feed their time, energy, desires, ideas, and data with no idea of who controls it or where it might lead them.

Even though transparency and consumer rights protection have been written into Chinese AI laws, human rights protection remains stagnant, as people are unaware of their rights and the potential harms that come with the abusive and malicious use of the technology. Instead, most mainland Chinese citizens, in the absence of choice, have accepted surveillance technology, such as the omnipresence of facial-recognition security cameras, as a normal and convenient feature of public life. The mechanism for change is blocked.

We can see in the Chinese AI dystopia that people are not in control of the technology but are subjected to a regime that sees technology as a means to expand and consolidate the state’s political and economic power. It shows us that a human rights approach to AI should, at its core, aim to rebalance power among the corporate-state, the machine, and the people through a decision-making framework that places people back at the center, and to unwind tech fanaticism through human-oriented research and public deliberation, reflective cultural practices, and humanity education.

Oiwan Lam is a regional editor at Global Voices covering news about East Asia.

Ibrahim Kizza is a visual artist, designer, and illustrator whose work explores human connection, identity, and culture. His illustrations are defined by bold compositions, expressive colour, and a strong narrative focus, often centring Black life and lived experience. Working across editorial and digital spaces, he creates art and illustrations that balance simplicity with emotional depth, using contrast and symbolism to communicate complex ideas with clarity. For this project, Ibrahim develops a visual response to the tension between artificial and human connection, reinforcing the value of lived experience and collective creativity in an increasingly automated world. Beyond illustration, his interests span design, sport, and film, which continue to inform his visual language and storytelling.